
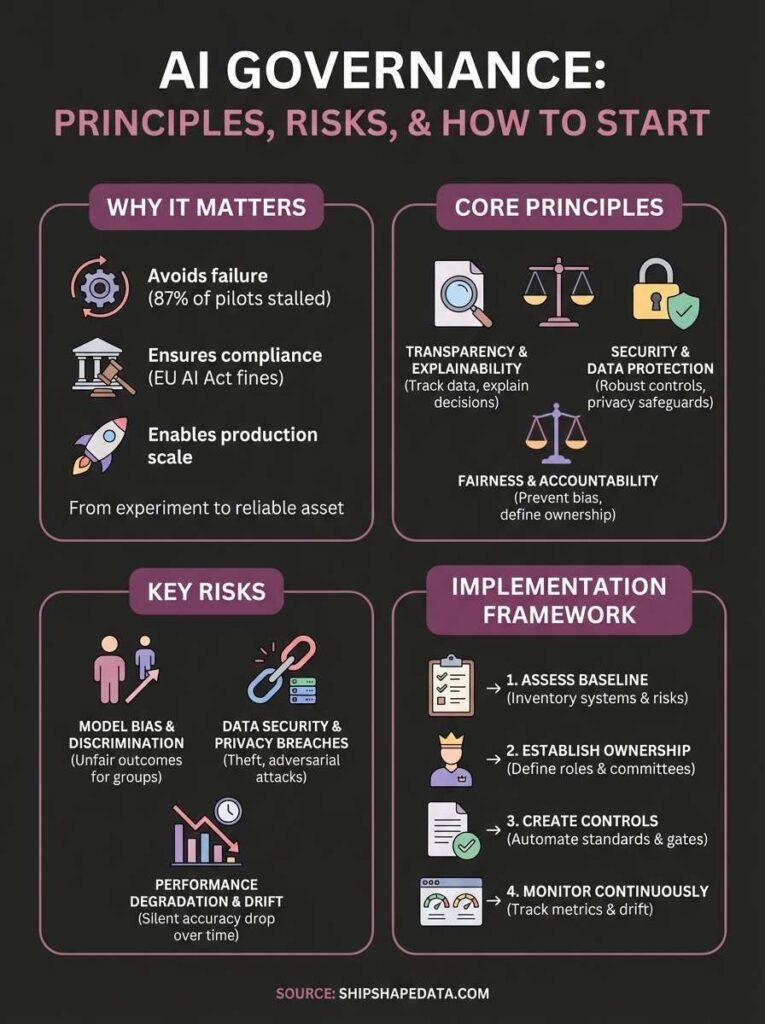

AI governance is the set of policies, processes, and controls that guide how your organisation develops, deploys, and monitors AI systems. It ensures your AI operates safely, ethically, and within legal boundaries while protecting against risks like bias, data breaches, and regulatory penalties. Without proper governance, AI projects often fail to move from pilot to production or create unintended harm.

This article explains the principles that underpin effective AI governance, the key risks you need to address, and the frameworks regulators expect you to follow. You’ll learn how to build an operating model that works for your organisation, what tools help you monitor AI systems in production, and how to start implementing governance that supports AI success rather than slowing it down. Whether you’re launching your first AI initiative or scaling existing projects, understanding governance is essential for turning AI from an experiment into a reliable business asset.

Your organisation faces mounting pressure to deploy AI systems that deliver tangible business value, yet by one widely cited industry estimate, 87% of AI projects never reach production. The gap between experimental pilots and operational systems stems from weak foundations: unreliable data, unclear accountability, and absent controls. Without governance, your AI initiatives expose you to legal penalties, reputational harm, and wasted investment while competitors establish AI as a sustainable competitive advantage.

Regulators are tightening requirements for AI transparency, fairness, and data protection. The EU AI Act, whose obligations phase in between 2025 and 2027, sets fines of up to €35 million or 7% of global annual turnover for prohibited AI practices, and up to €15 million or 3% for breaches of most other obligations, including those that apply to high-risk systems. The UK's principles-based framework expects organisations to demonstrate accountability throughout the AI lifecycle. Your teams need documented processes, bias monitoring, and impact assessments to satisfy these standards before incidents occur.

Beyond compliance, poor AI governance creates public relations crises that damage customer trust and brand value. An AI system that discriminates in hiring, denies legitimate insurance claims, or leaks sensitive data generates immediate media scrutiny and long-term market scepticism. Governance frameworks prevent these outcomes by establishing testing protocols, human oversight mechanisms, and incident response procedures before AI systems interact with customers or make consequential decisions.

Governance transforms AI from a laboratory experiment into a reliable business tool by addressing operational readiness gaps. Your data science teams might build accurate models, but production requires data quality controls, version management, and performance monitoring that only governance structures provide. Without these elements, AI systems degrade over time, produce inconsistent outputs, or break when data patterns shift.

Effective governance accelerates deployment by clarifying who owns decisions, what standards systems must meet, and how you will measure success throughout the AI lifecycle.

When you establish governance early, you create repeatable processes that scale across multiple AI initiatives. Teams gain clear guidelines for data handling, model approval thresholds, and deployment gates that reduce bottlenecks and prevent costly rework. This systematic approach helps your organisation extract consistent value from AI investments rather than treating each project as a unique experiment that may or may not succeed.

Implementing AI governance requires a structured approach that balances control with speed. Your organisation needs practical processes that guide AI development without creating bureaucratic bottlenecks that stall valuable projects. Start by assessing your current AI maturity, then build governance incrementally around the systems you already have in production or plan to deploy soon. This focused approach delivers immediate risk reduction while establishing patterns you can scale across future initiatives.

Your first step involves mapping what AI systems currently operate within your organisation and identifying governance gaps. Conduct an inventory of all AI applications, models, and data sources that feed these systems, regardless of whether teams officially classify them as AI projects. Many organisations discover shadow AI initiatives where departments deploy machine learning tools without central oversight or risk evaluation.

During this assessment, document where each AI system sits in its lifecycle (development, testing, production, retired), what data it processes, and what business decisions it influences. You should also identify existing policies that partially cover AI activities, such as data protection standards or software deployment procedures. This baseline reveals which areas require immediate attention and where you can leverage existing governance structures rather than building from scratch.
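
One way to make this baseline concrete is to keep the inventory as structured records rather than a free-form spreadsheet, so gaps can be flagged automatically. The sketch below is a minimal illustration under that assumption; `AISystemRecord`, `governance_gaps`, and the example systems are hypothetical names, not a prescribed schema.

```python
from dataclasses import dataclass, field
from enum import Enum


class LifecycleStage(Enum):
    """Lifecycle stages named in the assessment step."""
    DEVELOPMENT = "development"
    TESTING = "testing"
    PRODUCTION = "production"
    RETIRED = "retired"


@dataclass
class AISystemRecord:
    """One entry in a hypothetical AI inventory."""
    name: str
    owner: str  # empty string = no accountable owner identified yet
    stage: LifecycleStage
    data_sources: list[str] = field(default_factory=list)
    decisions_influenced: list[str] = field(default_factory=list)
    covering_policies: list[str] = field(default_factory=list)


def governance_gaps(record: AISystemRecord) -> list[str]:
    """Flag basic gaps the baseline assessment should surface."""
    gaps = []
    if not record.owner:
        gaps.append("no accountable owner")
    if not record.data_sources:
        gaps.append("data sources undocumented")
    if record.stage is LifecycleStage.PRODUCTION and not record.covering_policies:
        gaps.append("in production without a covering policy")
    return gaps


inventory = [
    AISystemRecord("invoice-classifier", "finance-ops", LifecycleStage.PRODUCTION,
                   ["erp_invoices"], ["payment routing"], ["data protection policy"]),
    # A "shadow AI" tool discovered during the inventory, with no owner or documentation.
    AISystemRecord("cv-screening-pilot", "", LifecycleStage.PRODUCTION,
                   decisions_influenced=["candidate shortlisting"]),
]

for record in inventory:
    print(record.name, governance_gaps(record))
```

Systems with the most gaps, especially those already in production and influencing consequential decisions, are natural candidates for immediate attention.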

Understanding what AI governance means in practice starts with knowing what you’re actually governing and where your biggest risks currently exist.

Even a well-designed governance framework fails without clear accountability for AI-related decisions and outcomes. Designate specific individuals or teams responsible for approving AI projects, monitoring production systems, and responding when issues arise. Your governance structure should specify who makes technical decisions versus business decisions, and at what point senior leadership approval becomes necessary.

Create a cross-functional AI governance committee that includes representatives from data science, legal, information security, and relevant business units. This committee reviews proposed AI use cases, assesses risk levels, and ensures projects align with your organisation’s ethical standards and compliance requirements. Define the scope of this committee’s authority clearly so teams understand which decisions they can make independently versus which require formal review.

Your teams need written guidelines that translate governance principles into practical requirements. Develop standards for data quality, model testing, documentation, and deployment approval that reflect both regulatory expectations and your organisation’s risk tolerance. These standards should cover technical aspects like performance thresholds and bias testing alongside operational concerns like change management and rollback procedures.

Build controls into your AI development workflow rather than treating governance as a separate activity. Implement automated checks for data quality issues, mandatory documentation templates, and staged deployment gates that prevent untested systems from reaching production. Your controls should make compliance the path of least resistance while still allowing experienced teams to move quickly on low-risk applications.

Effective AI governance rests on principles that ensure your AI systems serve business objectives while protecting stakeholders from harm. These principles define what AI governance means in practice and provide the foundation for policies, technical controls, and decision-making frameworks. Your governance approach should embed these principles throughout the AI lifecycle rather than treating them as compliance checkboxes to complete after development finishes.

Your organisation must maintain clear documentation of how AI systems make decisions and what data they use to reach conclusions. This principle requires you to track data lineage, record model architecture choices, and preserve the logic behind threshold settings or classification rules. When your AI system denies a loan application or flags a transaction as fraudulent, affected individuals deserve understandable explanations rather than references to opaque algorithms.

Transparency extends beyond technical documentation to include communication about AI capabilities and limitations with users and stakeholders. You should disclose when AI systems support human decisions versus making autonomous choices, and provide information about accuracy rates, known failure modes, and circumstances where human review occurs. This openness builds trust while managing expectations about what your AI can reliably deliver.

Your AI governance framework must prevent systems from producing discriminatory outcomes or perpetuating historical biases present in training data. This principle demands rigorous testing across demographic groups, regular bias audits during production, and correction mechanisms when fairness issues emerge. You need defined processes for measuring fairness based on your specific use case, whether that means demographic parity, equal opportunity, or another appropriate metric.
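
To show how the two metrics named above differ, here is a minimal sketch that computes the largest between-group gap for demographic parity (similar selection rates across groups) and equal opportunity (similar true positive rates among genuinely positive cases). It assumes binary labels and predictions coded as 0/1; the function names and toy data are illustrative only, and which gap matters, and how large a gap is tolerable, remains a policy decision for your use case.

```python
def _rates_by_group(labels, preds, groups):
    """Return (selection rate, true positive rate) for each group."""
    stats = {}
    for y, p, g in zip(labels, preds, groups):
        s = stats.setdefault(g, {"n": 0, "selected": 0, "pos": 0, "tp": 0})
        s["n"] += 1
        s["selected"] += p
        if y == 1:
            s["pos"] += 1
            s["tp"] += p
    rates = {}
    for g, s in stats.items():
        if s["pos"] == 0:
            raise ValueError(f"group {g!r} has no positive labels; TPR is undefined")
        rates[g] = (s["selected"] / s["n"], s["tp"] / s["pos"])
    return rates


def fairness_gaps(labels, preds, groups):
    """Largest between-group gaps for two common fairness criteria."""
    rates = _rates_by_group(labels, preds, groups)
    selection = [sel for sel, _ in rates.values()]
    tpr = [t for _, t in rates.values()]
    return {
        # Demographic parity: each group is selected at a similar rate.
        "demographic_parity_diff": max(selection) - min(selection),
        # Equal opportunity: qualified members of each group are selected at similar rates.
        "equal_opportunity_diff": max(tpr) - min(tpr),
    }


labels = [1, 0, 1, 0, 1, 0, 1, 0]
preds = [1, 0, 1, 1, 1, 0, 0, 0]
groups = ["A"] * 4 + ["B"] * 4
print(fairness_gaps(labels, preds, groups))
# {'demographic_parity_diff': 0.5, 'equal_opportunity_diff': 0.5}
```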

Accountability requires clear ownership of AI outcomes, with named individuals responsible for monitoring performance and addressing issues before they escalate into crises.

Establishing accountability means defining roles for who approves AI deployments, monitors ongoing performance, and responds to incidents. Your governance structure should specify escalation paths when AI systems behave unexpectedly and outline remediation procedures that balance speed with thoroughness. Without accountability, AI initiatives create diffuse responsibility where no one owns the consequences of automated decisions.

Your AI systems process sensitive information that requires robust security controls and privacy safeguards aligned with data protection regulations. This principle covers technical measures like encryption, access controls, and secure model storage alongside procedural requirements for data minimisation and retention policies. You must protect training data, production models, and inference outputs from unauthorised access while complying with the EU GDPR, the UK GDPR and Data Protection Act 2018, or sector-specific regulations.

Security considerations include defending against adversarial attacks that manipulate AI outputs through poisoned training data or crafted inputs designed to exploit model weaknesses. Your governance approach should incorporate threat modelling specific to AI systems, vulnerability assessments for deployed models, and incident response plans that account for AI-specific attack vectors beyond traditional cybersecurity concerns.

Understanding what AI governance means requires recognising the specific threats AI systems introduce to your organisation. These risks differ from traditional software failures because AI models make probabilistic decisions based on patterns in data rather than following explicit rules. Your governance framework must address these AI-specific vulnerabilities while maintaining operational efficiency and supporting innovation. Without targeted risk management, you expose your business to financial losses, legal liability, and operational disruptions that undermine confidence in AI initiatives.

Your AI systems can perpetuate or amplify societal biases present in training data, leading to discriminatory outcomes against protected groups. A recruitment model trained on historical hiring data might learn to favour candidates from certain demographics because past human decisions reflected unconscious bias. Similarly, credit scoring algorithms can deny loans to qualified applicants from underrepresented communities if training data contains patterns linked to demographic factors rather than creditworthiness.

Bias manifests in subtle ways that standard testing misses. Your model might achieve high overall accuracy while performing significantly worse for specific subgroups, creating fairness issues that regulatory audits will identify. Detection requires disaggregated performance analysis across demographic categories and continuous monitoring as your AI processes new populations or enters different markets.

Your AI models hold valuable intellectual property while processing sensitive customer information, making them attractive targets for adversaries seeking competitive advantage or personal data. Attackers can extract training data from deployed models through inference attacks, reverse-engineer proprietary algorithms, or manipulate inputs to cause incorrect predictions that serve malicious purposes. These threats extend beyond traditional cybersecurity concerns to include AI-specific vulnerabilities like model poisoning and adversarial examples.

Data governance failures in AI systems create cascading risks, from regulatory penalties under GDPR to reputational damage that erodes customer trust and market position.

Privacy risks intensify when your AI combines multiple data sources or infers sensitive attributes from seemingly innocuous inputs. Models can learn to predict protected characteristics like health conditions or financial status from proxy variables, creating unexpected compliance violations. Your governance approach must enforce data minimisation, establish retention policies, and implement technical controls that prevent unauthorised access to both training datasets and production models.

Your AI models lose accuracy over time as real-world conditions diverge from training data patterns, a phenomenon called model drift that governance processes must detect and address. External factors like economic shifts, competitor actions, or regulatory changes alter the relationships your model learned, causing predictions to become unreliable without triggering obvious failures. This silent degradation erodes business value while potentially creating incorrect decisions that harm customers or violate operational standards.

Monitoring drift requires establishing performance baselines and implementing automated alerts when accuracy drops below acceptable thresholds. Your governance framework should define retraining triggers, approval gates for deploying updated models, and rollback procedures when new versions perform worse than predecessors.
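
A performance baseline and alert threshold can be as simple as a rolling window of recent outcomes compared against the accuracy recorded at approval. The sketch below assumes ground-truth outcomes eventually arrive for each prediction; `AccuracyMonitor`, its parameters, and the example values are hypothetical and stand in for whatever your approval process actually records.

```python
from collections import deque
from typing import Optional


class AccuracyMonitor:
    """Rolling accuracy check against the baseline recorded at approval time."""

    def __init__(self, baseline_accuracy: float, max_drop: float, window_size: int):
        self.baseline = baseline_accuracy
        self.max_drop = max_drop  # tolerated drop before the retraining trigger fires
        self.window = deque(maxlen=window_size)  # keeps only the most recent outcomes

    def record(self, prediction, actual) -> None:
        self.window.append(prediction == actual)

    def rolling_accuracy(self) -> Optional[float]:
        if not self.window:
            return None
        return sum(self.window) / len(self.window)

    def retraining_triggered(self) -> bool:
        # Wait for a full window so a handful of early errors cannot fire the alert.
        if len(self.window) < self.window.maxlen:
            return False
        return self.rolling_accuracy() < self.baseline - self.max_drop


monitor = AccuracyMonitor(baseline_accuracy=0.90, max_drop=0.05, window_size=10)
for pred, actual in [(1, 1)] * 8 + [(1, 0)] * 2:
    monitor.record(pred, actual)
print(monitor.rolling_accuracy(), monitor.retraining_triggered())  # 0.8 True
```

A triggered alert would start the retraining and approval process rather than swap models automatically, which keeps the approval gates and rollback procedures in the loop.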

Your AI governance approach must align with regulatory frameworks that establish legal requirements and industry expectations for AI development and deployment. These frameworks range from binding legislation with substantial penalties to voluntary standards that shape best practices across sectors. Understanding what AI governance means in regulatory terms helps you avoid compliance violations while building systems that meet stakeholder expectations for transparency, fairness, and accountability.

The EU AI Act represents the most comprehensive AI legislation globally, imposing a risk-based framework that categorises AI systems according to their potential harm. High-risk applications in areas like employment, credit scoring, and law enforcement face strict requirements for human oversight, documentation, and testing before deployment. Providers of high-risk systems must complete conformity assessments, maintain detailed technical documentation, and run post-market monitoring, while deployers carry their own duties, such as assigning human oversight and monitoring how the system operates. These obligations apply if you place high-risk systems on the EU market or deploy them within the EU, even if your organisation is based elsewhere.

UK regulators take a sector-specific approach where existing bodies like the ICO, FCA, and Ofcom apply AI governance principles within their respective domains. This framework emphasises five principles: safety, security and robustness; appropriate transparency and explainability; fairness; accountability and governance; and contestability and redress. You need to demonstrate how your AI systems comply with sector regulations while adhering to data protection standards under the UK GDPR, and prepare for potential future legislation as the regulatory landscape matures.

Compliance failures carry severe financial consequences: EU AI Act penalties for prohibited practices reach €35 million or 7% of global annual turnover, whichever is higher, making governance a business necessity rather than an optional safeguard.
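
Because the penalty ceiling for prohibited practices under the EU AI Act is the higher of a fixed amount and a share of turnover, exposure scales with company size. Writing $T$ for total worldwide annual turnover in the preceding financial year, the maximum fine works out as:

$$
F_{\max} = \max\left(\text{€}35\ \text{million},\ 0.07\,T\right)
$$

For a group with €2 billion in turnover, 7% is €140 million, so the ceiling is €140 million rather than €35 million. For SMEs and start-ups, the Act applies the lower of the two amounts instead. These are upper limits; actual fines are set case by case.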

The NIST AI Risk Management Framework (AI RMF) provides practical guidance for organisations building governance processes without prescribing specific technical solutions. This voluntary framework organises risk management into four functions (Govern, Map, Measure, and Manage) that help you structure risk assessments, establish controls throughout the AI lifecycle, and document decisions in a form regulators and auditors can follow. Adopting the NIST framework demonstrates your commitment to responsible AI even where mandatory regulations remain under development.

OECD AI Principles offer internationally recognised standards that emphasise transparency, human-centred values, and accountability across AI development. These principles inform regulatory approaches in multiple jurisdictions and provide a foundation for governance policies that work across geographic markets. Your framework should reference these standards when defining ethical requirements and establishing review criteria for proposed AI applications.

Your compliance strategy requires monitoring regulatory developments across jurisdictions where you operate while implementing flexible governance structures that accommodate evolving requirements. Establish processes that exceed minimum standards in areas like bias testing, documentation, and impact assessments so you maintain compliance as regulations tighten. This proactive approach reduces the cost and disruption of adapting to new mandates while positioning your organisation as a trusted AI provider in regulated markets.

Your AI governance structure needs clearly defined roles and an operating model that distributes authority appropriately across your organisation. Successful governance balances centralised oversight with decentralised execution, allowing technical teams to move quickly while maintaining necessary controls. You should establish formal responsibilities that span from executive leadership through operational teams, ensuring no gaps exist in accountability when AI systems require decisions or interventions.

Your C-suite must take direct ownership of AI governance rather than delegating responsibility entirely to technical functions. Designate a specific executive, often the CTO or Chief Data Officer, as the accountable leader who reports AI governance status to the board and allocates resources for compliance activities. This executive sets the risk appetite for AI initiatives, approves high-risk deployments, and champions governance requirements across business units that might view controls as obstacles to innovation.

Board-level engagement provides legitimacy and resources that governance teams need to enforce standards effectively. Your executive owner should present regular updates on AI risks, regulatory developments, and governance maturity to board members, ensuring strategic alignment between business objectives and responsible AI practices.

Without executive ownership, your governance framework becomes a policy document that teams ignore when deadlines pressure them to bypass controls.

Your organisation requires a standing committee that brings together expertise from data science, legal, information security, compliance, and relevant business functions. This committee reviews proposed AI use cases, assesses risk classifications, and approves or rejects deployments based on the governance standards you have established. Structure meetings with clear agendas, decision criteria, and documented outcomes so teams understand why applications received approval or require modifications.

Committee composition should reflect the scope of AI applications you deploy. Manufacturing organisations might include operational technology specialists, while financial services firms typically need risk management and regulatory compliance experts. Your committee chair, typically a senior technical leader, maintains authority to expedite low-risk approvals between meetings while escalating contentious decisions to the full group.

Your governance framework functions through individuals who perform specific tasks within AI development workflows. Model owners take responsibility for specific AI systems throughout their lifecycle, monitoring performance, investigating anomalies, and coordinating retraining activities. Data stewards ensure training datasets meet quality standards and comply with usage restrictions, while AI ethics reviewers evaluate fairness implications before models enter production.

Technical teams need embedded governance advocates who understand both engineering requirements and policy constraints. These individuals translate governance principles into practical implementation guidance, review code for compliance issues, and identify when projects require escalation to the governance committee. Your operating model should clarify reporting lines, decision authority, and escalation thresholds so operational staff know when they can proceed independently versus when they need formal approval.

Your AI governance framework requires concrete mechanisms that track system performance and detect issues before they escalate into failures. Monitoring tools turn AI governance from abstract policy into measurable oversight that reveals when models deviate from expected behaviour. You need both technical metrics that assess AI accuracy and operational controls that demonstrate compliance with your governance standards. Without systematic monitoring, your organisation operates blindly, discovering problems only after they harm customers or trigger regulatory investigations.

Your monitoring approach must measure how accurately AI systems perform their intended tasks across different conditions. Track fundamental metrics such as precision (the share of cases the model flags that are genuinely positive), recall (the share of actual positive cases the model catches), and the F1 score, which balances the two. These measurements provide early warning when performance drops below thresholds you defined during model approval, signalling the need for investigation or retraining.
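
As a minimal sketch of how these metrics relate, the function below derives precision, recall, and F1 from confusion-matrix counts and compares them with approval thresholds. The threshold values and the counts are hypothetical; your approval process would supply the real ones.

```python
def classification_metrics(tp: int, fp: int, fn: int) -> dict:
    """Precision, recall and F1 from confusion-matrix counts."""
    precision = tp / (tp + fp) if tp + fp else 0.0  # share of flagged cases that are real
    recall = tp / (tp + fn) if tp + fn else 0.0     # share of real cases that were flagged
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {"precision": precision, "recall": recall, "f1": f1}


# Hypothetical thresholds agreed at model approval.
APPROVED_THRESHOLDS = {"precision": 0.75, "recall": 0.70}

metrics = classification_metrics(tp=80, fp=20, fn=40)
breaches = [name for name, floor in APPROVED_THRESHOLDS.items() if metrics[name] < floor]
print(metrics)
print(breaches)  # ['recall']
```

Here precision (0.80) passes but recall (about 0.67) does not, which is exactly the kind of trade-off an aggregate accuracy figure can hide.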

Beyond overall accuracy, you should monitor performance across demographic subgroups to detect fairness issues that aggregate statistics might mask. Your systems need to alert you when accuracy for specific populations falls below parity thresholds, indicating potential bias that requires immediate attention. Implement dashboards that visualise these metrics for stakeholders who make deployment decisions, ensuring technical teams and governance committees share a common understanding of system health.
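
The subgroup check can be sketched as computing accuracy per group and flagging any group that trails overall accuracy by more than an agreed gap. This is one simple parity rule among several; the `max_gap` value, function names, and data are assumptions for illustration.

```python
from collections import defaultdict


def subgroup_accuracy(labels, preds, groups):
    """Accuracy computed separately for each subgroup."""
    correct, total = defaultdict(int), defaultdict(int)
    for y, p, g in zip(labels, preds, groups):
        total[g] += 1
        correct[g] += int(y == p)
    return {g: correct[g] / total[g] for g in total}


def parity_alerts(labels, preds, groups, max_gap):
    """Subgroups whose accuracy trails overall accuracy by more than max_gap."""
    overall = sum(int(y == p) for y, p in zip(labels, preds)) / len(labels)
    return {g: acc for g, acc in subgroup_accuracy(labels, preds, groups).items()
            if overall - acc > max_gap}


labels = [1, 0, 1, 1, 0, 1, 1, 0, 1, 0]
preds = [1, 0, 1, 1, 0, 1, 0, 0, 0, 0]
groups = ["A"] * 6 + ["B"] * 4
print(parity_alerts(labels, preds, groups, max_gap=0.1))  # {'B': 0.5}
```

Overall accuracy in this toy example is 80%, which looks acceptable until the breakdown shows group B at 50%.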

Your AI systems depend on high-quality input data that matches the patterns models learned during training. Deploy automated checks that flag anomalies in data distributions, missing values, or schema violations before corrupted inputs reach production models. These quality gates prevent garbage data from generating unreliable predictions that undermine business processes or customer experiences.
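
A quality gate of this kind can start as a function that checks each incoming batch against the schema the model was trained on. The sketch below is a minimal example that assumes records arrive as dictionaries; `EXPECTED_SCHEMA`, the 5% missing-value tolerance, and the sample batch are hypothetical.

```python
# Hypothetical schema captured when the model was trained.
EXPECTED_SCHEMA = {"age": int, "income": float, "region": str}


def validate_batch(rows, schema, max_missing_rate=0.05):
    """Return a list of data quality issues; an empty list means the batch passes."""
    issues = []
    for column, expected_type in schema.items():
        values = [row.get(column) for row in rows]
        missing = sum(v is None for v in values)
        if rows and missing / len(rows) > max_missing_rate:
            issues.append(f"{column}: {missing}/{len(rows)} values missing")
        wrong_type = [v for v in values if v is not None and not isinstance(v, expected_type)]
        if wrong_type:
            issues.append(f"{column}: {len(wrong_type)} value(s) not of type {expected_type.__name__}")
    unexpected = {key for row in rows for key in row} - set(schema)
    if unexpected:
        issues.append(f"unexpected columns: {sorted(unexpected)}")
    return issues


batch = [
    {"age": 34, "income": 52000.0, "region": "north"},
    {"age": None, "income": 61000.0, "region": "south"},
    {"age": "41", "income": 47000.0, "region": "east", "notes": "manual entry"},
]
issues = validate_batch(batch, EXPECTED_SCHEMA)
if issues:
    print("Batch held back from the model:", issues)
```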

Detecting drift early prevents the silent degradation that occurs when real-world conditions diverge from training assumptions, protecting your organisation from decisions based on outdated models.

Monitor the statistical properties of incoming data to detect data drift, and compare predictions with actual outcomes as they become available to detect concept drift, where the relationship between features and outcomes changes over time. Your tools should compare current data distributions against baseline profiles established during training, triggering alerts when divergence exceeds acceptable limits. This proactive detection gives you time to retrain models before performance deteriorates to unacceptable levels.
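
One common way to compare a production feature distribution with its training baseline is the population stability index (PSI), sketched below for a single numeric feature. PSI looks only at inputs, so it catches data drift; a shift in the feature-outcome relationship can still occur with stable inputs and needs outcome-based checks. The bin edges, the epsilon for empty bins, and the 0.25 alert level (a widely used rule of thumb, not a standard) are assumptions.

```python
import math
from bisect import bisect_right


def bin_shares(values, edges):
    """Share of values falling in each bin defined by sorted cut points."""
    counts = [0] * (len(edges) + 1)
    for v in values:
        counts[bisect_right(edges, v)] += 1
    return [c / len(values) for c in counts]


def population_stability_index(baseline, current, edges, eps=1e-6):
    """PSI = sum over bins of (actual - expected) * ln(actual / expected)."""
    psi = 0.0
    for expected, actual in zip(bin_shares(baseline, edges), bin_shares(current, edges)):
        # Clamp empty bins so the logarithm stays defined.
        expected, actual = max(expected, eps), max(actual, eps)
        psi += (actual - expected) * math.log(actual / expected)
    return psi


baseline = list(range(100))      # feature values seen during training
current = list(range(50, 150))   # the same feature in production, shifted upwards
edges = [25, 50, 75]             # hypothetical cut points taken from the training data
psi = population_stability_index(baseline, current, edges)
print(round(psi, 2), "alert" if psi > 0.25 else "ok")
```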

Your governance structure requires comprehensive logging that documents who accessed AI systems, what decisions they made, and when interventions occurred. Maintain audit trails that record model versions in production, changes to configuration parameters, and human overrides of automated recommendations. These records prove essential when regulators request evidence of your governance processes or when investigating incidents that require root cause analysis.
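
A sketch of such an audit trail appears below. It chains each entry to the previous one with a SHA-256 hash, one common way to make after-the-fact edits detectable. It keeps entries in memory purely for illustration; a real deployment would likely write to append-only or write-once storage. `AuditLog`, the actions, and the field names are hypothetical.

```python
import hashlib
import json
from datetime import datetime, timezone


def _digest(entry: dict) -> str:
    """SHA-256 over a canonical JSON encoding of the entry."""
    return hashlib.sha256(json.dumps(entry, sort_keys=True).encode("utf-8")).hexdigest()


class AuditLog:
    """Hash-chained audit trail, held in memory for illustration."""

    def __init__(self):
        self.entries = []

    def record(self, actor, action, model_version, details=None):
        entry = {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "actor": actor,
            "action": action,
            "model_version": model_version,
            "details": details or {},
            # Linking each entry to the previous hash makes silent edits detectable.
            "prev_hash": self.entries[-1]["hash"] if self.entries else "",
        }
        entry["hash"] = _digest(entry)
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute the chain; False means some stored entry no longer matches its hash."""
        prev_hash = ""
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev_hash"] != prev_hash or _digest(body) != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True


log = AuditLog()
log.record("j.smith", "deploy", "credit-model:v3")
log.record("r.patel", "human_override", "credit-model:v3",
           {"case_id": "A-1042", "reason": "income evidence not captured by model"})
print(log.verify())  # True
```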

Build reporting capabilities that aggregate monitoring data into compliance-focused summaries your governance committee and executives need for oversight. Your reports should highlight trends in model performance, summarise bias testing results, and document remediation actions taken when issues emerged.

Your governance framework transitions from theory to practice when you embed controls directly into the workflows your teams use to build and deploy AI systems. Effective enterprise governance functions as integrated guardrails rather than separate approval layers that slow development velocity. You achieve this by automating compliance checks, building governance requirements into development tools, and creating clear pathways for different risk levels that let low-risk applications move faster than high-risk deployments. Understanding what AI governance means operationally requires examining how organisations apply these principles to specific AI implementations.

Your development pipeline should enforce governance requirements automatically at each stage rather than relying on manual reviews. Implement pre-deployment gates that check for bias metrics, data quality thresholds, and documentation completeness before models can advance to production environments. These automated controls prevent teams from accidentally deploying systems that violate your governance standards while eliminating bottlenecks caused by waiting for committee reviews.

Build governance checks into your version control and continuous integration processes so every model change triggers compliance validation. Your pipeline might run fairness tests across demographic groups, verify that training data meets retention policies, and confirm that model documentation includes required explanations of decision logic. Teams receive immediate feedback when their work fails governance criteria, allowing them to address issues during development rather than discovering problems after deployment.
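
A pre-deployment gate can be a small script the pipeline runs before promoting a model, returning every reason the candidate falls short. The sketch below is one possible shape, assuming upstream jobs have already computed F1, a parity gap, and data quality results; the thresholds, `REQUIRED_DOC_SECTIONS`, and `CandidateModel` are hypothetical, not a standard.

```python
from dataclasses import dataclass, field

# Hypothetical documentation sections your standards might require.
REQUIRED_DOC_SECTIONS = ("intended_use", "training_data", "decision_logic", "known_limitations")


@dataclass
class CandidateModel:
    name: str
    f1: float
    demographic_parity_diff: float
    data_quality_issues: list = field(default_factory=list)
    documentation: dict = field(default_factory=dict)


def deployment_gate(model: CandidateModel, min_f1: float = 0.75,
                    max_parity_diff: float = 0.10) -> list:
    """Return the reasons a model cannot be promoted; empty means it may proceed."""
    failures = []
    if model.f1 < min_f1:
        failures.append(f"F1 {model.f1:.2f} below {min_f1:.2f}")
    if model.demographic_parity_diff > max_parity_diff:
        failures.append(f"parity gap {model.demographic_parity_diff:.2f} exceeds {max_parity_diff:.2f}")
    if model.data_quality_issues:
        failures.append(f"{len(model.data_quality_issues)} unresolved data quality issue(s)")
    missing = [s for s in REQUIRED_DOC_SECTIONS if not model.documentation.get(s)]
    if missing:
        failures.append(f"documentation missing: {', '.join(missing)}")
    return failures


candidate = CandidateModel(
    name="credit-model:v4",
    f1=0.81,
    demographic_parity_diff=0.14,
    documentation={"intended_use": "loan pre-screening",
                   "training_data": "2019-2023 applications",
                   "decision_logic": "gradient-boosted score with fixed cut-off"},
)
for reason in deployment_gate(candidate):
    print("BLOCKED:", reason)
```

In a CI job, a non-empty result would typically end the script with a non-zero exit status (for example via `sys.exit(1)`), which most CI systems treat as a failed stage, so the feedback reaches the team before deployment.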

Automated governance in your development pipeline transforms compliance from a bureaucratic hurdle into a quality assurance mechanism that improves AI reliability while accelerating safe deployments.

Your customer-facing AI systems require heightened governance controls because failures directly impact user experiences and regulatory exposure. Deploy human-in-the-loop mechanisms for high-stakes decisions where AI recommendations support rather than replace human judgment, particularly in applications that affect employment, credit, or healthcare. Your governance approach should specify when human oversight becomes mandatory based on decision impact and confidence thresholds.
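
The rule "mandatory human oversight based on decision impact and confidence" can be written down as an explicit routing function, which makes the policy reviewable and testable. This minimal sketch assumes two inputs only, the decision's domain and the model's confidence; the 0.90 floor and the domain list are hypothetical policy values.

```python
from enum import Enum


class Route(Enum):
    AUTOMATED = "automated decision"
    HUMAN_REVIEW = "human review required"


# Hypothetical policy values: high-impact domains and a confidence floor.
HIGH_IMPACT_DOMAINS = {"employment", "credit", "healthcare"}
MIN_AUTOMATED_CONFIDENCE = 0.90


def route_decision(domain: str, confidence: float) -> Route:
    """Decide whether a model output may act alone or must go to a person."""
    if domain in HIGH_IMPACT_DOMAINS:
        # High-stakes domains: the model recommends, a human decides.
        return Route.HUMAN_REVIEW
    if confidence < MIN_AUTOMATED_CONFIDENCE:
        # Low-confidence outputs are escalated even in lower-impact domains.
        return Route.HUMAN_REVIEW
    return Route.AUTOMATED


print(route_decision("credit", 0.98).value)     # human review required
print(route_decision("marketing", 0.95).value)  # automated decision
print(route_decision("marketing", 0.60).value)  # human review required
```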

Implement real-time monitoring that tracks customer interactions with AI systems to identify problematic patterns before they escalate. Your monitoring should flag unusual prediction distributions, detect when users repeatedly reject AI recommendations, and alert you to potential bias issues emerging in production. This operational visibility lets you intervene quickly when AI behaviour deviates from expectations.
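
Detecting repeated rejections can start with counting, per recommendation type, how often users override the AI. The sketch below assumes interactions are logged as `(recommendation_type, accepted)` pairs; the 30% threshold and minimum volume are hypothetical values chosen to avoid alerting on a handful of events.

```python
from collections import Counter


def override_hotspots(interactions, max_override_rate, min_volume):
    """Recommendation types that users reject unusually often.

    interactions: iterable of (recommendation_type, accepted) pairs.
    Types seen fewer than min_volume times are skipped to avoid noisy alerts.
    """
    shown, rejected = Counter(), Counter()
    for rec_type, accepted in interactions:
        shown[rec_type] += 1
        if not accepted:
            rejected[rec_type] += 1
    rates = {t: rejected[t] / shown[t] for t in shown if shown[t] >= min_volume}
    return {t: r for t, r in rates.items() if r > max_override_rate}


interactions = (
    [("refund_offer", False)] * 7 + [("refund_offer", True)] * 3
    + [("upgrade_offer", True)] * 9 + [("upgrade_offer", False)]
    + [("loyalty_offer", False)] * 2
)
print(override_hotspots(interactions, max_override_rate=0.3, min_volume=5))
# {'refund_offer': 0.7}
```

A high override rate does not prove the model is wrong, but it is a strong signal that its outputs and user expectations have diverged and that someone should investigate.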

If your organisation runs dozens of AI projects simultaneously, your governance structure must scale without creating administrative overhead that stifles innovation. Establish risk-based approval tiers where low-risk applications receive expedited reviews while high-risk systems undergo comprehensive assessment. This tiered approach concentrates governance resources on initiatives that carry the greatest potential for harm.
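
Risk tiers work best when the intake questionnaire maps deterministically to a review path, so teams can predict the outcome before they submit. The sketch below uses three yes/no questions as a deliberately simplified assumption; a real questionnaire would cover more factors, and the question set, tier names, and mapping are all hypothetical.

```python
from dataclasses import dataclass
from enum import Enum


class ReviewTier(Enum):
    EXPEDITED = "expedited review (e.g. chair sign-off between meetings)"
    STANDARD = "standard review"
    FULL_COMMITTEE = "full committee assessment"


@dataclass
class UseCaseAnswers:
    """Answers from a hypothetical intake questionnaire."""
    makes_consequential_decisions: bool  # e.g. employment, credit, healthcare
    processes_personal_data: bool
    customer_facing: bool


def assign_tier(answers: UseCaseAnswers) -> ReviewTier:
    """Map questionnaire answers to the review path the use case must follow."""
    if answers.makes_consequential_decisions:
        return ReviewTier.FULL_COMMITTEE
    if answers.processes_personal_data or answers.customer_facing:
        return ReviewTier.STANDARD
    return ReviewTier.EXPEDITED


print(assign_tier(UseCaseAnswers(False, False, False)).name)  # EXPEDITED
print(assign_tier(UseCaseAnswers(False, True, False)).name)   # STANDARD
print(assign_tier(UseCaseAnswers(True, True, True)).name)     # FULL_COMMITTEE
```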

Create reusable governance templates and playbooks that project teams can adapt to their specific use cases rather than building governance processes from scratch for each initiative. Your templates should include standard risk assessment questionnaires, bias testing protocols, and deployment checklists that capture institutional knowledge while maintaining consistency across projects. This standardisation reduces the burden on your governance committee while ensuring comprehensive coverage of regulatory requirements.

Your AI governance framework determines whether your AI initiatives deliver consistent business value or remain experimental pilots that never reach production. This article has explained what AI governance means in practical terms: the policies, processes, and controls that ensure your AI systems operate safely, ethically, and within regulatory boundaries. You now understand the core principles that underpin effective governance, the specific risks you must address, and the frameworks regulators expect you to follow.

Implementing governance requires clear ownership, documented standards, and monitoring tools that detect issues before they escalate into costly failures. Your organisation benefits most when you embed governance into development workflows rather than treating it as a separate compliance exercise that slows progress. Strong foundations in data quality, model testing, and accountability mechanisms separate AI systems that scale successfully from those that fail to move beyond initial experiments.

Building production-ready AI systems requires both technical expertise and governance frameworks that support reliable, long-term success across your enterprise.