Predictive analytics uses historical data, statistical algorithms, and machine learning to forecast what might happen next. Rather than just reporting what occurred last quarter, it answers questions like “Which customers are likely to churn?” or “When will this equipment need…

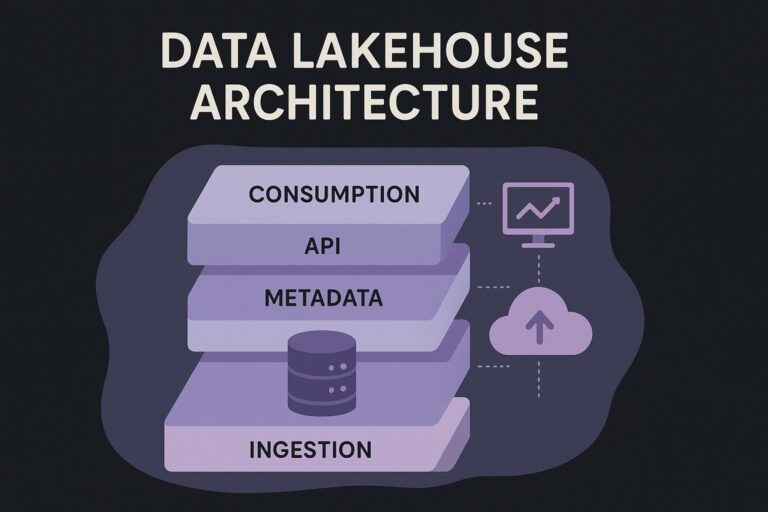

Unify your data with a data lakehouse architecture. Explore its definition, core layers, and practical diagrams to gain faster insights and reduce costs.

A vector database is a system designed to store, manage, and search data that has been converted into numerical representations called vectors.
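As a rough illustration of the store-and-search idea, the sketch below keeps vectors in memory and ranks them by cosine similarity to a query. The class name `TinyVectorStore`, the function `cosine_similarity`, and the three-dimensional example vectors are all hypothetical, chosen only for this example. Real vector databases typically use approximate nearest-neighbour indexes rather than the brute-force scan shown here.

```python
import math


def cosine_similarity(a, b):
    """Return the cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # treat a zero vector as similar to nothing
    return dot / (norm_a * norm_b)


class TinyVectorStore:
    """Toy in-memory vector store (hypothetical, for illustration only)."""

    def __init__(self):
        self._items = {}  # maps item id -> vector

    def add(self, item_id, vector):
        """Store (or overwrite) the vector for an item id."""
        self._items[item_id] = list(vector)

    def search(self, query, k=1):
        """Return the k most similar items as (item_id, score) pairs."""
        scored = [
            (cosine_similarity(query, vector), item_id)
            for item_id, vector in self._items.items()
        ]
        scored.sort(reverse=True)  # highest similarity first
        return [(item_id, score) for score, item_id in scored[:k]]


# Made-up embeddings: the first two point in similar directions.
store = TinyVectorStore()
store.add("cat", [0.9, 0.1, 0.0])
store.add("dog", [0.8, 0.2, 0.1])
store.add("car", [0.0, 0.1, 0.9])

results = store.search([1.0, 0.0, 0.0], k=2)
print(results)
```

With these made-up vectors, the query ranks "cat" and "dog" ahead of "car" because their vectors point in nearly the same direction as the query.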

Retrieval-Augmented Generation (RAG) is an AI architecture that enhances large language models by connecting them to live data sources.

Responsible AI refers to the practice of designing, deploying, and managing artificial intelligence systems in a way that is ethical, transparent, accountable, and aligned with human values.

Generative Adversarial Networks (GANs) are a class of machine learning models used to generate new, realistic data by training two neural networks in competition with one another. This adversarial setup enables AI systems to create synthetic data, such as images,…

Model drift refers to the gradual decline in a machine learning model’s performance over time as real-world data diverges from the data it was originally trained on. It can lead to inaccurate predictions, biased outcomes, and unreliable decision-making in production…

Generative AI is artificial intelligence that makes things. You give it a text prompt, it writes an essay. Describe an image, it draws one. It produces code, music, videos, and more. Traditional AI analyses data or makes predictions. Generative AI…

Model interpretability is the ability to understand how and why an artificial intelligence or machine learning model makes its predictions. It provides transparency into a model’s decision-making process, revealing which data features influenced the outcome and how they interacted…

A generative AI agent is not a chatbot. I know that sounds like a throwaway distinction, but it matters. A chatbot generates text when you ask it to. An agent reasons through a problem, picks actions, runs them, checks what…